Expression

4 November 2022

Machining the Dream

Yudhanjaya Wijeratne

As a sci-fi novelist and data analyst, Yudhanjaya Wijeratne is a keen user of the tools technology keeps making available to us. He charts the rise of art made by AIs and seeks to demystify the hype surrounding the headlines.

I am 14 years old.

I have broken my hand.

As it turns out, motorbikes and concrete walls make a near-lethal combination. My wrist has shattered. Several things that were inside are on the outside, and whatever is left of my palm is somewhere in the middle.

I am told that with years of physiotherapy I might recover the use of my hand, but it would never operate the same way again.

So I turn to the machine. Microsoft Word and Paint become my friends. I learn how to chicken-peck my thoughts onto virtual paper. I learn how to draw again, pixel-by-pixel, click-by-click. This infuriates my school teachers no end; they insist that real writing be on paper, and that real art is done with brush, oil and canvas.

Psychedelic Thor on a winged vespa scooter over a hellscape in the style of Picasso by DALLE-2

I am 27 years old.

I have come to an understanding of myself as a sort of cyborg; a tool-user in a long line of tool-users. I speak in English to people, in Python, R, Perl and clicks and clacks to the machine.

I publish a short story. In a dystopian future, there are no human writers anymore - instead, ShakespeareBot 2.0 dominates every bestseller chart, as does HemminwayAi.

But in 2019, I am contemplating a novel involving a machine poet and obsessing over translations of Tang Dynasty poetry. I know that this poet has to sound like a machine. In a fit of casual experimentation, I retrain OpenAI’s GPT-2 to write poetry.

The idea is not unique. The concept of text generation has been around on the machine-learning side of computer science as far back as Goldman’s PhD thesis at Stanford from 2000. The idea of using machine-like processes is even older, stretching from the 13th century’s Ars Magna through 1920s Dadaism to David Bowie himself. Every data scientist worth their salt can string together a Markov chain - a technique that uses mathematics pioneered in the early 20th century - to generate any amount of nonsense words that feel like infinite monkeys making a go at producing some Shakespeare. The 1964 ELIZA chatbot made humans believe that they were talking to a human therapist, and Dwarf Fortress can generate tens of thousands of years of history for its fictional worlds.

What has changed is that the technology is sophisticated enough to be almost as competent as human beings. When asked to write the (now-famous) report about scientists finding unicorns, it produces a story that could feasibly be BBC reporting. When shown poetry and asked to make more, it does so.

As with the procedural generation of video games, as with layers and alpha channels and all the other helpful tools that exist in virtual space, it turns out that AI can be used to make art; at that point, its label ceases to matter.

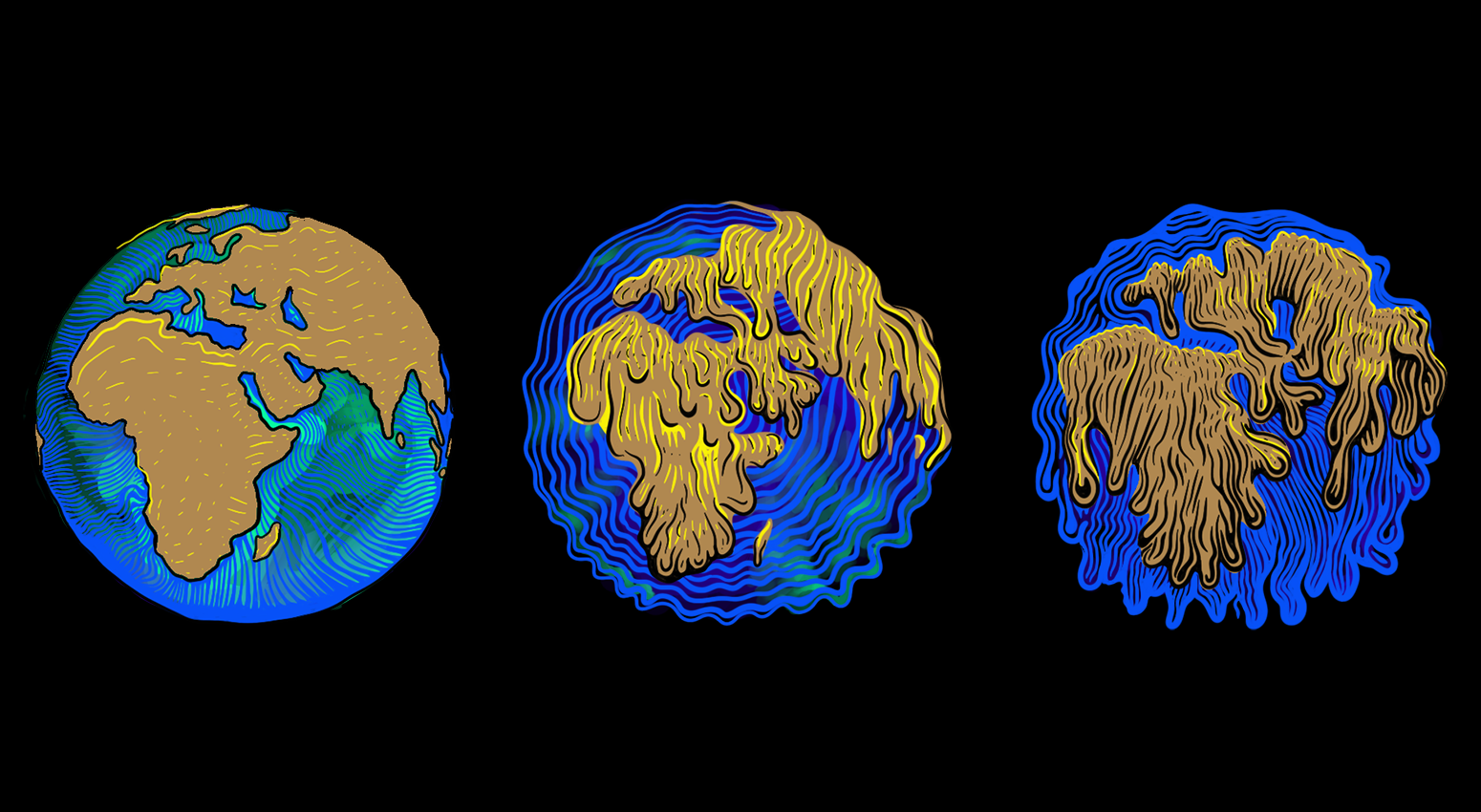

Recent advances have led to an explosion in AI-generated art. Key among the gene pools are DALL-E 2, Midjourney and Stable Diffusion. Think of them as brains frozen at a particular stage of development. The idea is, again, not unique. The popular genesis of this type of work can be traced back to Google’s nightmare-inducing DeepDream. Like a human being, such a model can mimic style and even rough form.

Batman as Monalisa in pop art roy Lichtenstein painting at the Louvre by DALLE-2

The technology moves fast. Most models can take images as input; this means they can iterate on an image, using AI to generate anything from Disney-esque images of Nintendo’s Princess Peach to surreal fairytale videos where images bleed into one another.

As far as applications go, Midjourney comes first, promising high-quality art for a meagre subscription fee. Then comes Stable Diffusion, for free.

These AI models casually appear in Canva, a widely-used graphic design platform for drawing and presentations. Someone builds a plugin for Photoshop; and so on. It seems that overnight, everyone has a master artist on demand.

Some artists accuse AI of copying work, assuming that it works as a mosaic-maker. Some accuse the AI of theft of style; the idea that, in observing millions of artworks, it is intentionally designed to replace human artists who work in said fashion. Others accuse it of being illegal on the grounds that the AI was made using data obtained without the consent of many of the subjects or artists involved in the original work.

A rusting mechbot cyborg with exposed flesh and skeletal sections and wires holding a flower by DALLE-2

These artificial minds have their own strange grammar, but they operate by creating noise - a sort of primordial soup of the data - and extracting features from it that match the concepts we ask for. However, there’s no denying that it feels bad. Much of the art used to train such AI models comes from websites designed long before it became feasible to bulk-download their art for machine learning, and from artists who never consented to their work being used in this way. The argument about consent is legally hazy. Scraping, or the process of using bots to extract content and data from a website, is legal; disable it, and Google ceases to exist. The argument about style is also hazy: it rests on the dubious claim that learning by observation is illegal.

The profession of artists and writers has always been in what Nassim Nicholas Taleb, in his book The Black Swan (2007), terms ‘Extremistan’ - a world where 1 percent takes home 99 percent of the reward. The 99 percent then fights over the scraps. And now it seems we must reckon with a machine reducing the field even further.

Of the cabal of people who make machines, some focus on extending human ability and some on replicating it. As of the 21st century, these lines are blurred because new tools and technologies quickly become a part of ‘our’ abilities. For example, Photoshop and digital art websites are not naturally occurring phenomena, yet many artists exist today because these tools exist.

Machines take the bottom end of a value chain and automate it, putting complex human skill sets out of use or democratising them, depending on which side of obsolescence you’re standing on.

Batman on holiday in Van Gogh impressionist painting by DALLE-2

The current controversy around AI-generated art is largely because of Stable Diffusion, an open-source model that does what OpenAI’s DALL-E and Google’s Imagen do: use text commands to generate art. The difference is that DALL-E and Imagen kept their ingredients behind closed doors and corporate paywalls; Stable Diffusion dumped everything on the public for free.

The sheer publicity of these events enabled two things: first, a wider range of artists came to understand how their works were being used; second, people could build things on top of these open-source models. In just weeks, it seems, we have seen AI-generated motion capture; AI-powered programming; AI-generated 3D models of brains built from MRIs; AI-powered virtual assistants; even AI-generated video clips. Legions of human tasks have been made easier; what once took a hundred people might take just one person and a computer.

I suspect that the current tidal wave of fear stems from the fact that the machine is too close to us, too fast, and far too competent. Consider that Kevin Hess, an AI-wielding artist, made a stunning 706-page graphic novel of philosopher Olaf Stapledon’s sci-fi novel Star Maker (1937) in around 100 hours. At just over eight minutes a page, Hess and his AI of choice are doing the work of multiple artists and typographers. Skill sets that many have laboured over for years have been rendered not obsolete, but so easy to access that they might as well be. This is the more practical argument: that artists who scrape by on a handful of commissions will vanish. Or as Jason M Allen, who won an art prize using an AI, said to the New York Times: ‘Art is dead.’

Another good place to look is the Moog synthesiser, a staple of 1970s prog-rock, built by Robert Moog. Like Stable Diffusion, it wasn’t particularly new technology, but it was put together in a way that made the technology usable and affordable. It also put many session musicians out of work. Today, much of what we call music exists not despite the synthesiser, but because of it. What if fear of the machine at the time had put the Moog out of production?

Furthermore, there is a notion that AI development is somehow altruistic. The current aesthetic of the AI movement is not ‘mad-scientist-out-to-dazzle-world.’ It is very much a corporate endeavour, very Silicon Valley-venture-capitalist in outlook. Its message to those it replaces is: ‘This is going to be an app soon.’ Robert Moog adopted a much kinder approach, working first with composers and musicians to build custom devices and add features to his synthesiser. But what we face now is a wave of killer apps, as Sequoia Capital puts it. The argument is couched more in terms like ‘potential to generate trillions of dollars of economic value’ than ‘Hey, look at this cool thing I made!’ The latter attitude was strongest in the AI world circa 2012. The talent building these AIs also believes in the Silicon Valley formula - build, monetise, IPO, repeat.

Gandhi on a Harley Davidson in the style of Robert Crumb by DALLE-2

It’s easy to react to the aesthetics, and this is a prime trigger for artists, for aesthetics are a large part of their work. On the consumer end, we are facing waves of apps that will take our words and turn them into pictures while we sip our tea.

Much of this art is excellent. From film to video games, the quality of indie productions will rise. A new generation of artists will get a massive lever to play with: for example, Stable Diffusion has now found its way into Photoshop, allowing you to imagine what the Mona Lisa might look like had Da Vinci kept tinkering with it. Likewise, indie game developers can generate concept art and 3D assets and produce a much more polished rendering of that dream game to work with.

Unsurprisingly, much of this progress will put money into corporate coffers as savvy marketing departments start cutting down on human commissions and tell an intern to play with an app instead. Much of this will raise the bar to entry for new artists, who will struggle to build a name for themselves in the face of a global machine that can produce commissions in seconds.

We’re essentially in that synthesiser moment again. The rather Extremistan game of being a writer or an artist continues. The tools, however, have changed, and we must adapt.

And I have no doubt that we will.

Yudhanjaya Wijeratne is a Sri Lankan science fiction author, activist and researcher. His work has appeared in Wired, Foreign Policy and Slate. His novel ‘The Salvage Crew’ was lauded as one of the best science fiction and fantasy books of 2020.

Banner Illustration Credit: Green Chromide

expression

Enjoy Your Freedom Outside

culture

From Peace to Protest

culture

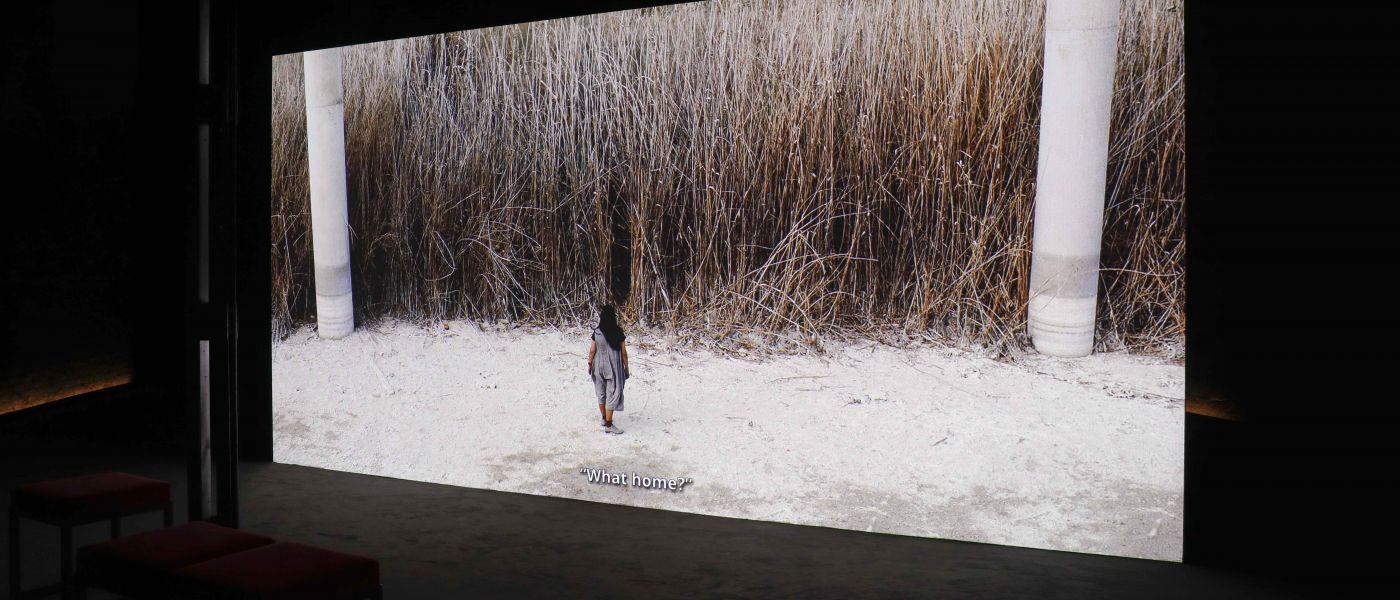

Homecoming | A Space For You

culture

In Her Country

culture

Spoons Out of Water

expression

Precarious Existence

culture

Roaming

expression

Abandoned: When a Crisis Allows Nature Back In

culture

An Outlook on Change

culture

Hybrid senses - Slow Art Tour

opinion

Humanising Cities

opinion

What is the role of the artist in society?

culture

Hassan Hajjaj: Carte Blanche

culture

Soothing the Soothsayers

culture

Humanity as Refuge I

culture

Humanity as Refuge II

culture

A Force To Reckon With: Manal Aldowayan

culture

Alserkal Avenue | The First Decade (Part 2)

culture

Alserkal Avenue | The First Decade (Part 1)

culture

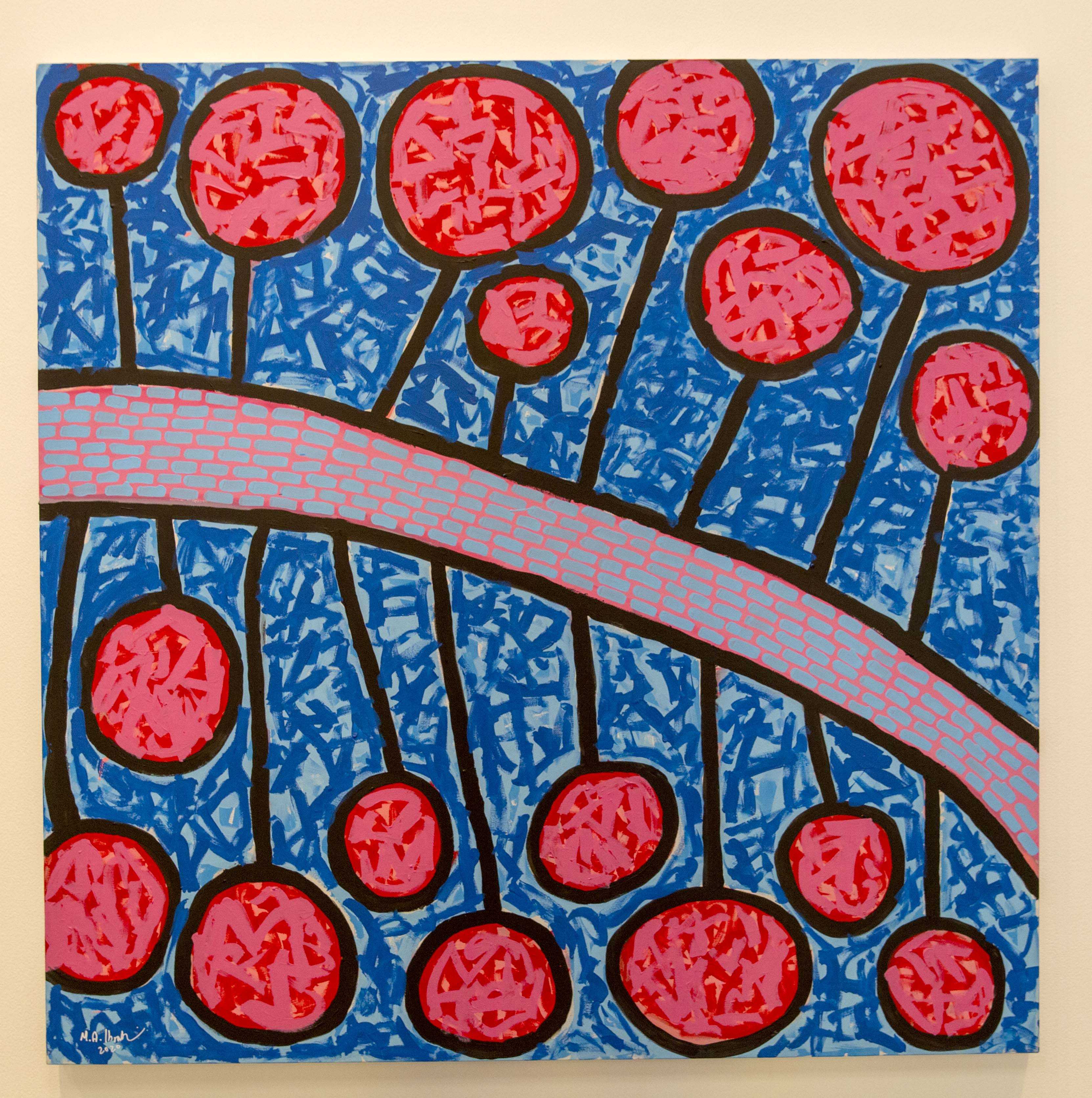

Turning The Spotlight On UAE-Based Emerging Artists

culture

Architecture Meets Nature: While We Wait

culture

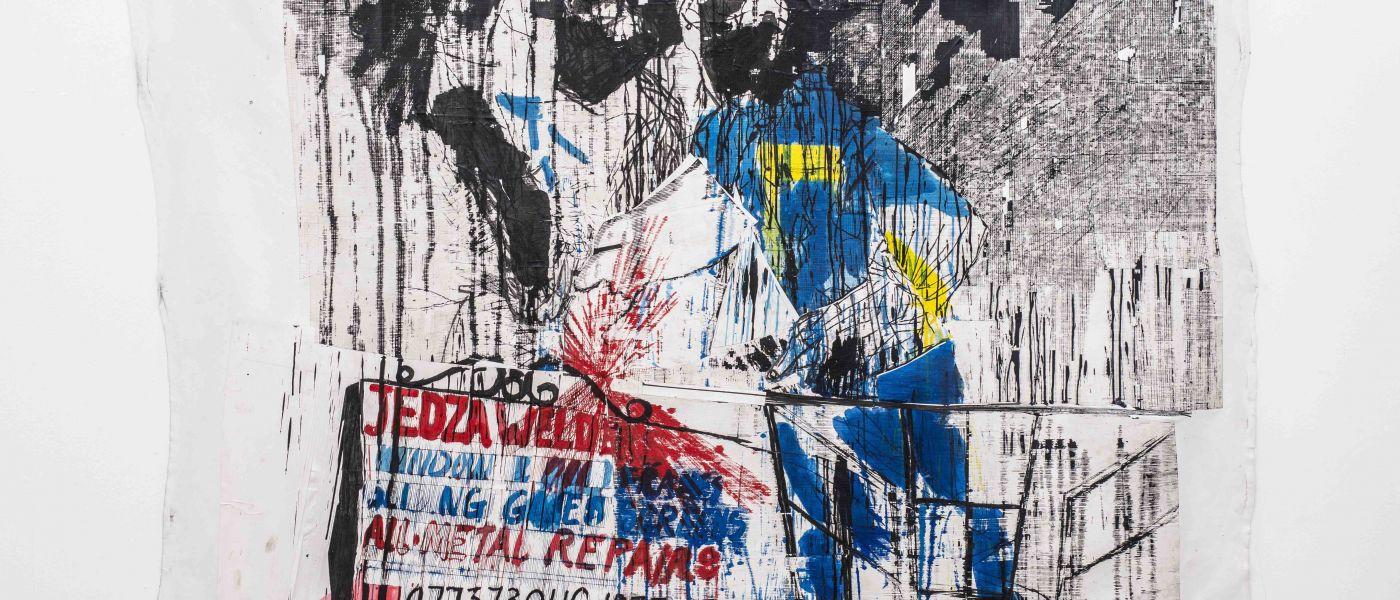

Burning Issues

expression

When Solidarity Is Not a Metaphor

expression

A closer look with Azza Al Qubaisi

expression

A closer look with Nathaniel Rackowe

expression

A closer look with Kais Salman

expression

A closer look with Sarah Almehairi

culture

Imploded, burned, turned to ash

culture

Sneak peak of An Outlook on Change

culture

Concrete Closed Sessions | Nujoom Alghanem

culture

JAFR. The Alchemy of Signs by Nja Mahdaoui | Elmarsa Gallery

culture

Sneak peak of Burning Issues

culture

Vikram Divecha's "El dorado"

culture

Cultures in Conversation | Openness and the Path to Prosperity

expression

The Alphabetics of the Barista Part II

expression

A Poem, A Garden

opinion

Sneak peak of Humanising Cities

culture

Cultures in Conversation | What Makes a City: Dimensions of Culture and Possibility of Community

expression

Alserkal Insider | Nightjar Coffee Roasters with Leon Surynt

culture

Cultures in Conversation | Never Be Lost: Learn to Read the Stars

culture

Cultures in Conversation | Climate change in the classroom, living room, street and beyond

expression

Concrete Closed Sessions: Danabelle Gutierrez and Charlie119

culture

Echo Holdings x Synthanatos

culture

Dayanita Singh in Conversation

culture

Noria: Circulation Of People In Systems

culture

When the Band Comes Marching In

culture

Adapt to Survive: Notes from the Future

culture

"Under": A Video Documentation

culture

While We Wait

culture

Safina Radio Project: Venice

culture

Super Fence

culture

Cultural Consulting

culture

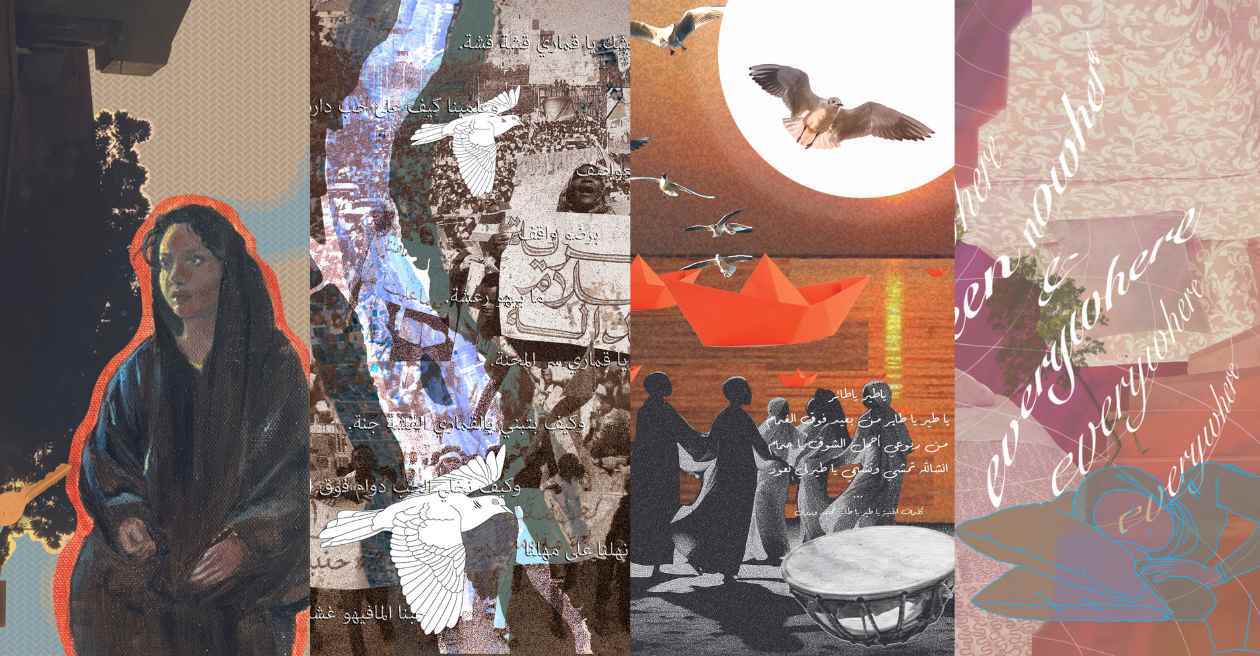

Resonance / رنين الرِّياح

culture

On Translucency

culture

Deliberate Pauses / وقفات متروية

culture

Research Rooms

culture

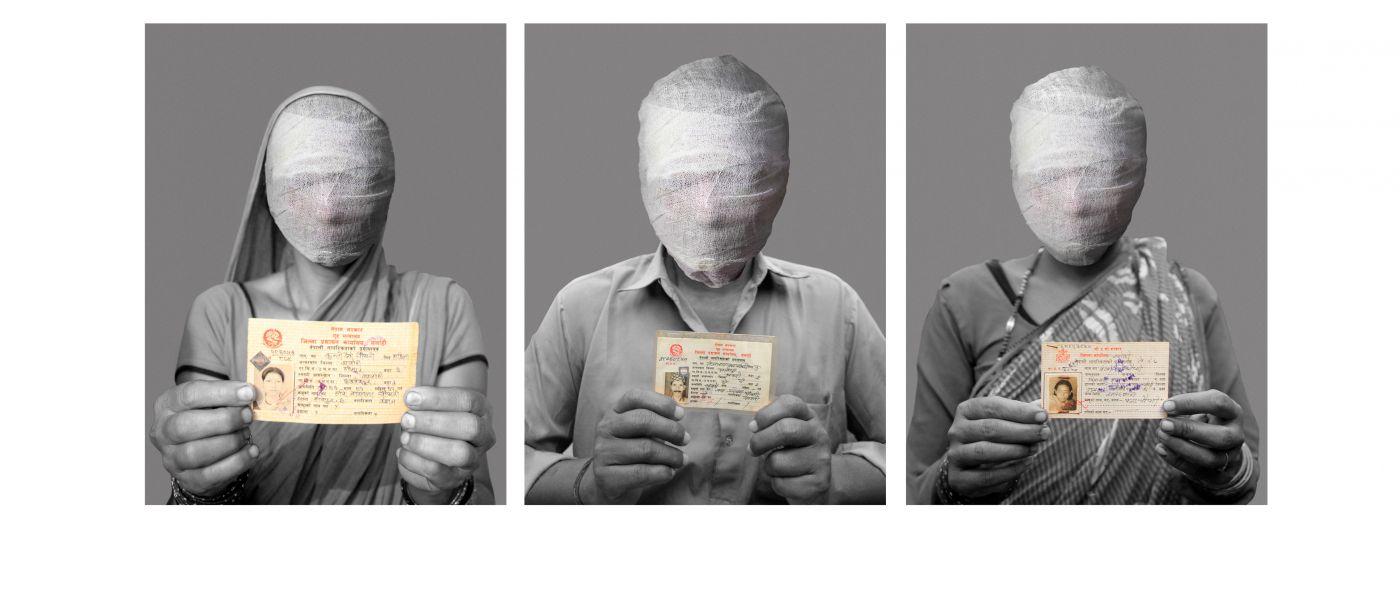

Nepal Picture Library

culture

Zora Snake

culture

Dima Srouji and Jasbir Puar

expression

The Greening Story

culture

Nahil Bishara’s Jerusalem

culture

Abu Fadi

culture

Fathi Ghabin: A Self-Portrait of the Working-Class

culture

On This Land

culture

The Age of Multi-Crises

expression

Quoz Arts Fest

expression

Drawing a Shifting Landscape

culture

Rewilding the Kitchen

expression

Radical Podcast x Alserkal Avenue Mini Series

expression

Alserkal Spotlight: Radical Contemporary Podcast

Haroon Mirza: Deciphering Nuance

expression

From the Archive | Spring 2023 Residency

A Feral Commons

The Global Co-Commission

opinion

What We're Listening To

Global Co-commission: 2022 - 2024

culture

Indie Publishers III Women Powered Platforms

expression

Making History: A Study of Archives

expression

Adverse Poetries

culture

Letter from Hollywood: How RRR Redefined Global Pop

expression

An Orchestration of Magic

Beyond the Measure of Time

expression

The Tree School Chronicles

expression

The Street Came First

culture

The Myth about Maths

culture

Ink, Paper, Alchemy II

opinion

Turn On, Tune In

expression

Saint Levant: Home-maker

culture

What did we gain at COP27?

expression

Fahd Burki and Ala Ebtekar Take to the Skies

culture

Arab Cinema in One Week

culture

Mud, Minarets, and Meaningless Events | A research convening

culture

Voice Notes from Venice

culture

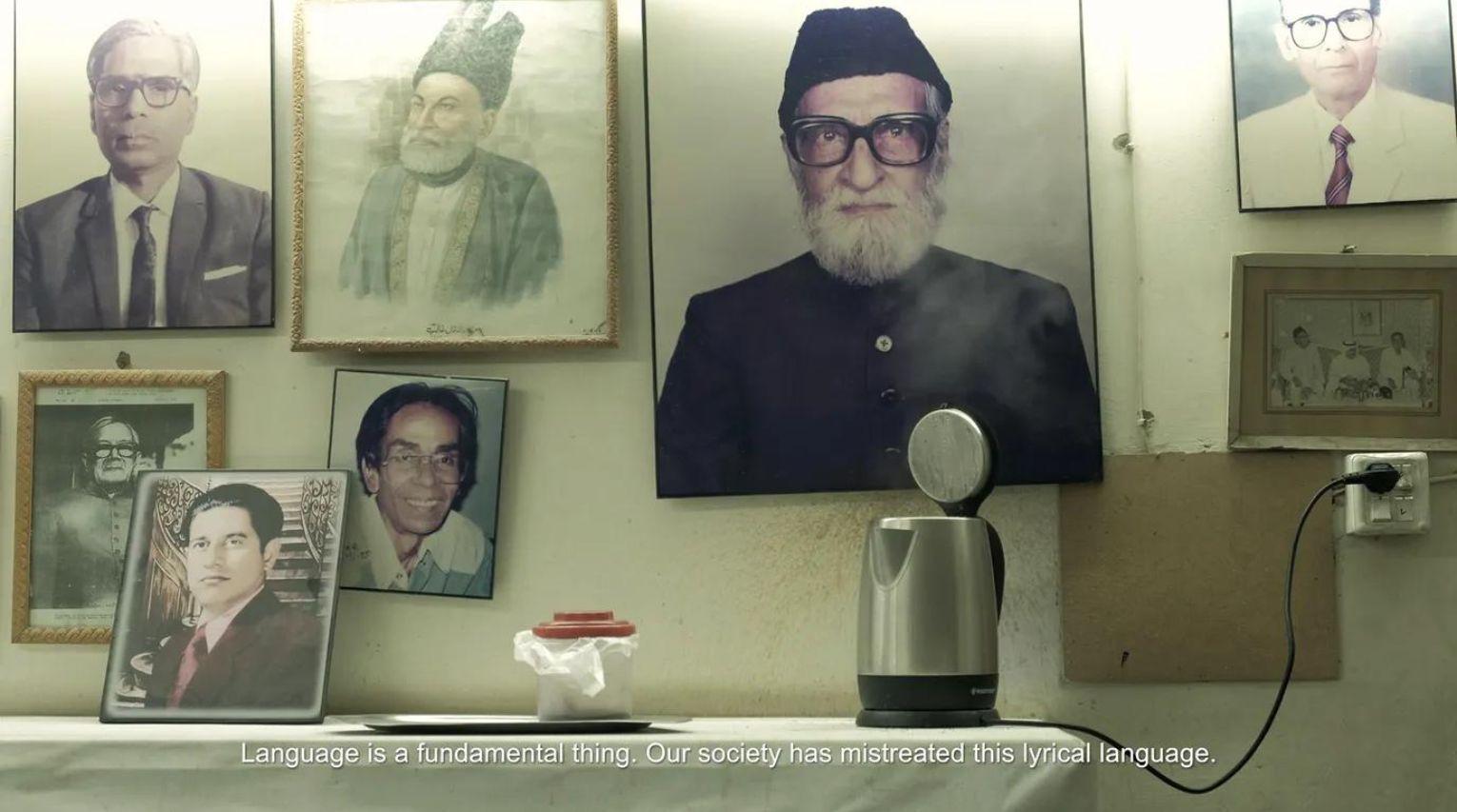

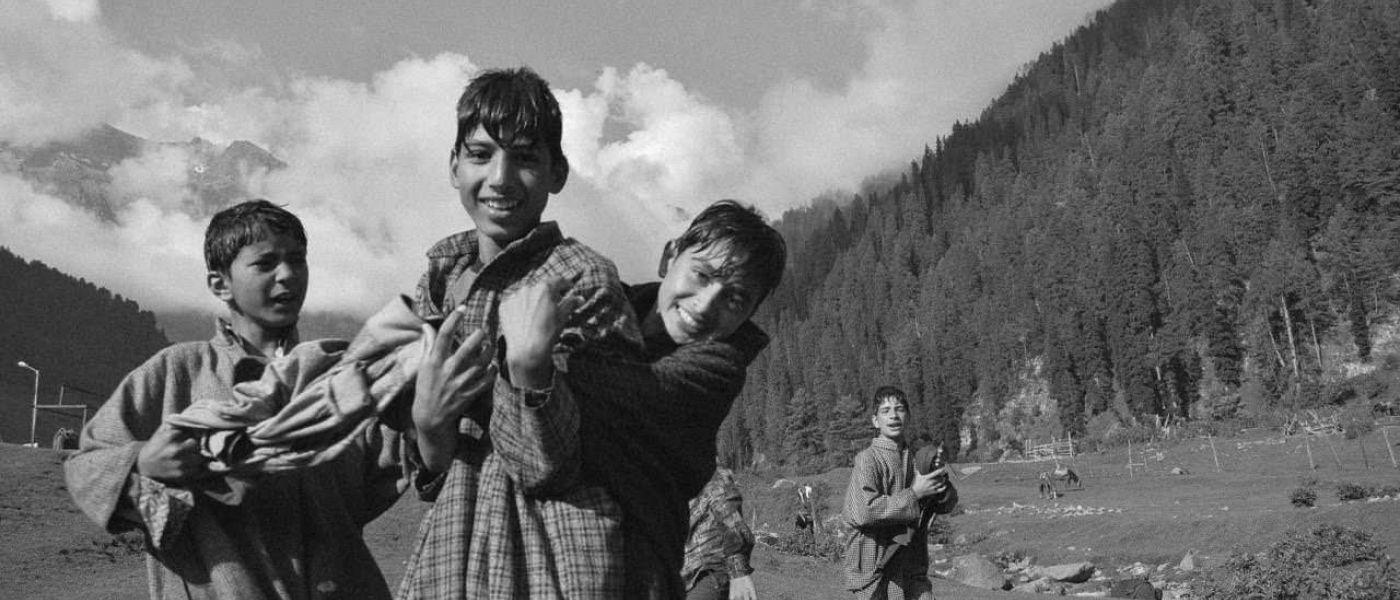

The Poetics of Partition

culture

A Reality Check for Indian Love

opinion

Resistance is futile: how I learned to appreciate the e-scooter

culture

The Technological Body

expression

Cultures in Conversation by Alserkal Advisory

culture

A Tour through A Supplementary Country Called Cinema

culture

Rewilding the Kitchen | Joori Wa Loomi by Moza AlMatrooshi

opinion

On Tolerance

culture

Layer upon Layer

culture

A Walk through ICD Brookfield

culture

Earth to Humans

culture

Overheard at WCCE

opinion

Why I Don’t Blame Institutions Anymore

expression

Open Studios: Still Lives

culture

An Incomplete History of Cinema, Part 3

culture

Hair Mapping Body; Body Mapping Land

expression

Cultures in Conversation Blog

culture

Rewilding the Kitchen | Mastic Fizz by Salma Serry

style

Who Owns Yoga?

expression

The Tower by Wilf Speller

culture

The Suffering Body

culture

August Observations

culture

Rewilding the Kitchen | Recipe No. 1 | Barri by Namliyeh

culture

Cultures in Conversation at Expo 2020

culture

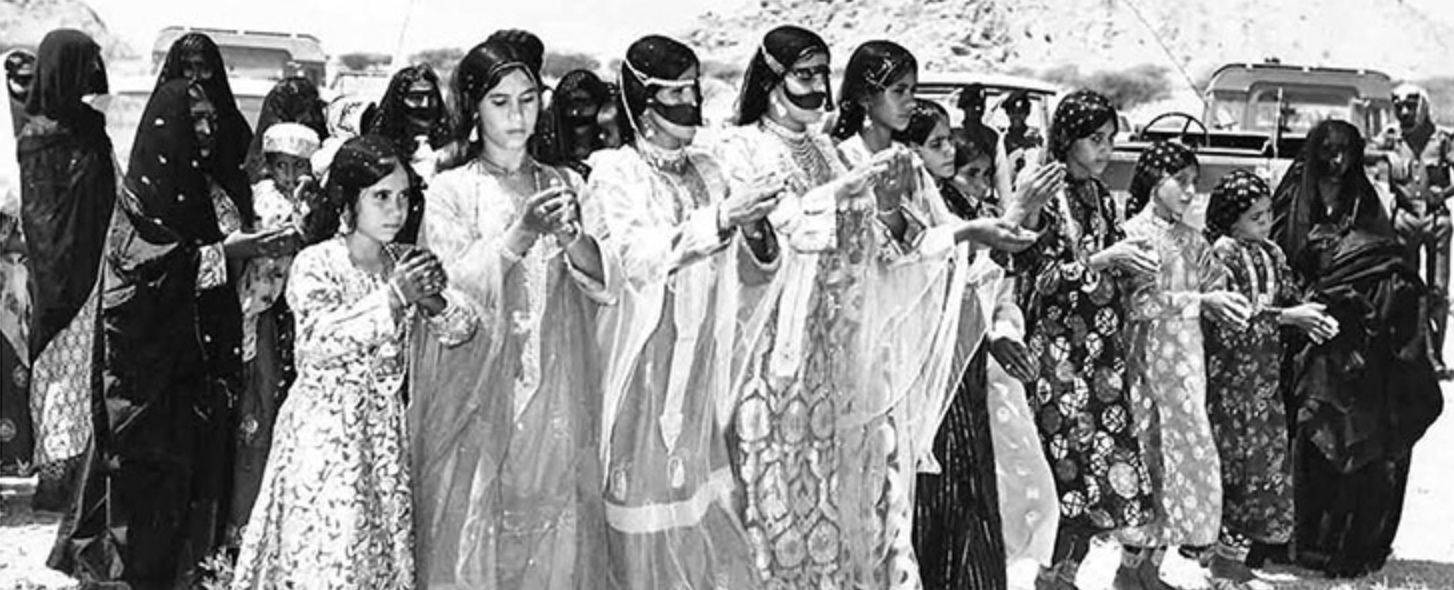

On Emirati Women

culture

The Alserkal Ecology Reader | Three Lectures on Architecture and Landscape in the Gulf

expression

Three Conversation Pieces III

culture

An Incomplete History of UAE Cinemas, Part 2

opinion

Design as a Wrapper

opinion

Engaging Audiences

expression

Three Conversation Pieces II

expression

Three Conversation Pieces I

culture

The Overseas Filipino Artist

culture

An Incomplete History of UAE Cinemas, Part 1

expression

Drone Go Chasing Waterfalls

expression

A Letter

opinion

Will the Fashion Industry Ever Truly Be Sustainable?

culture

How Will We Return?

culture

Mohamed Melehi And The Casablanca Art School Archives

expression

An Introductory Curriculum for Reparations

culture

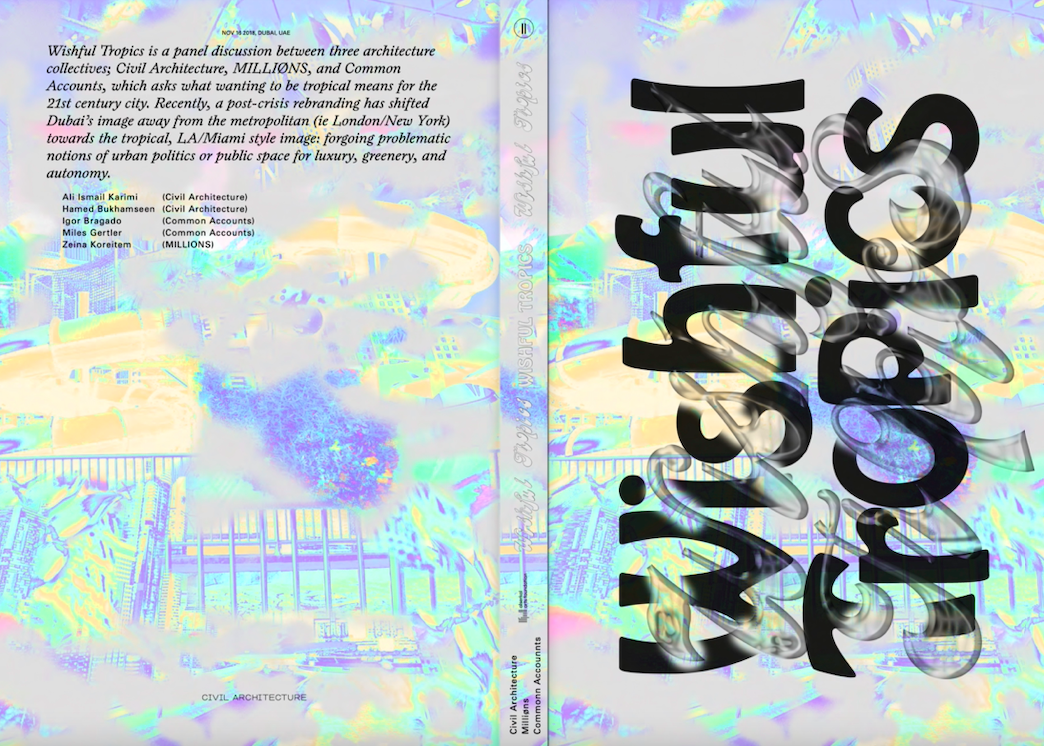

METASITU in conversation with Ghada Yaiche

culture

Cape Town: A New Capital for Art

opinion

The Lighthouse Podcast x Vilma Jurkute

culture

Connecting Cultures Through Contemporary Art

culture

One-on-One with Nabila Abdel Nabi

culture

The Making of a Ruin

culture

Mystical Warriors

culture

Is This Tomorrow?

culture

Slippery Modernism

culture

Is This Tomorrow? Art vs Architecture

culture

Living Under The Net

style

At the Confluence of Art and Industry

culture

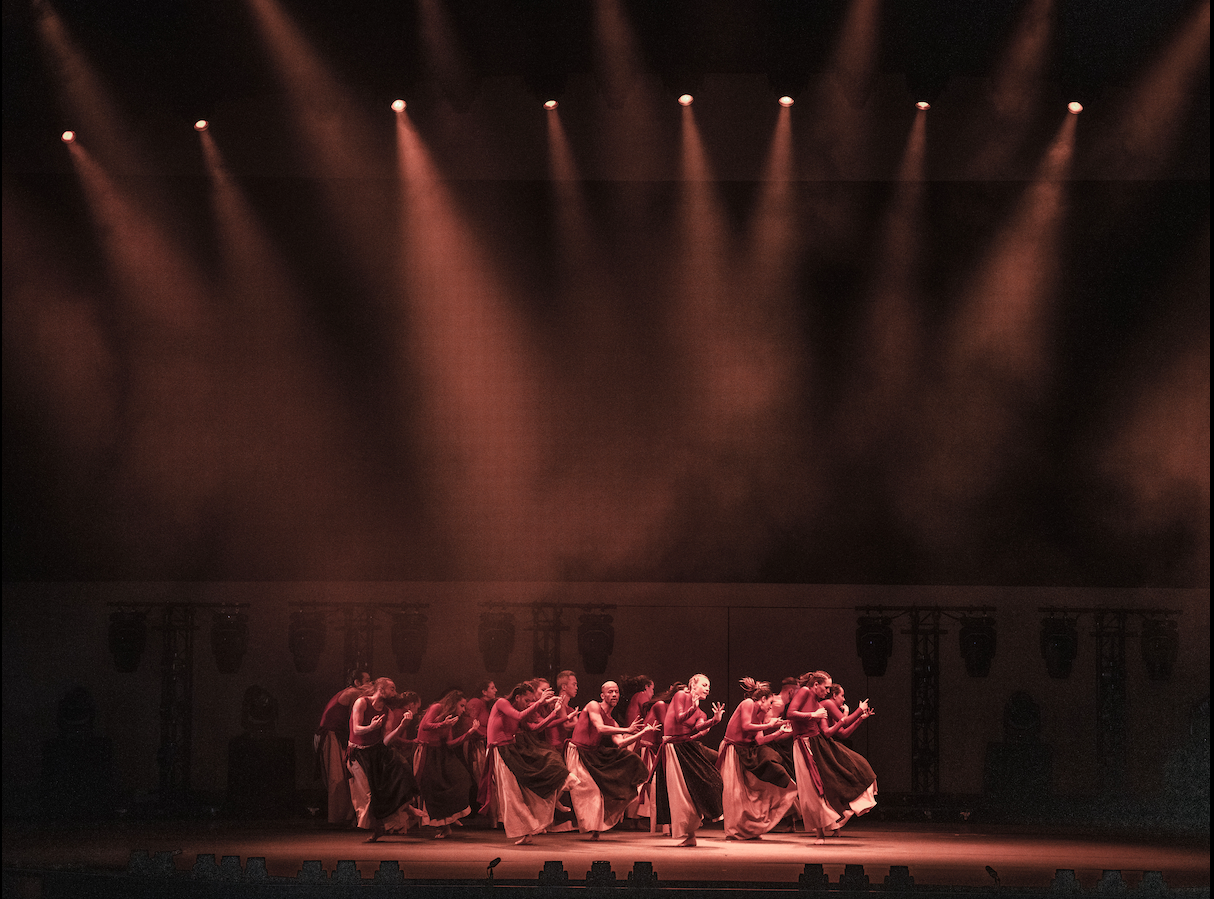

Poetry In Motion

culture

Collaborative Co-existence

culture

An Artistic Meditation

culture

Fabric(ated) Fractures

culture

The Africa Connection

culture

The Fabric of Fractures

culture

Chaos, Love, and Enigmas

culture

A Modern History

culture

Hydrogen Helium

culture

Q&A: Hale Tenger And Mari Spirito

culture

Re-Examining The Role Of The Museum In Society